Why AI Models Quietly Degrade After Deployment - Even When Nothing ‘Breaks’

In many factories, AI models are treated like set-it-and-forget-it tools. Once a predictive model is deployed to monitor production quality or optimize machine schedules, it often receives minimal attention - unless something visibly fails. But what many manufacturers don’t realize is that even when the system appears to be working, its performance can slowly degrade over time. This degradation is subtle, quiet, and often unnoticed until it starts impacting production outcomes.

Understanding why this happens is critical. Without that understanding, factories end up trusting AI models that are technically “running” but no longer delivering the predictions they were built for.

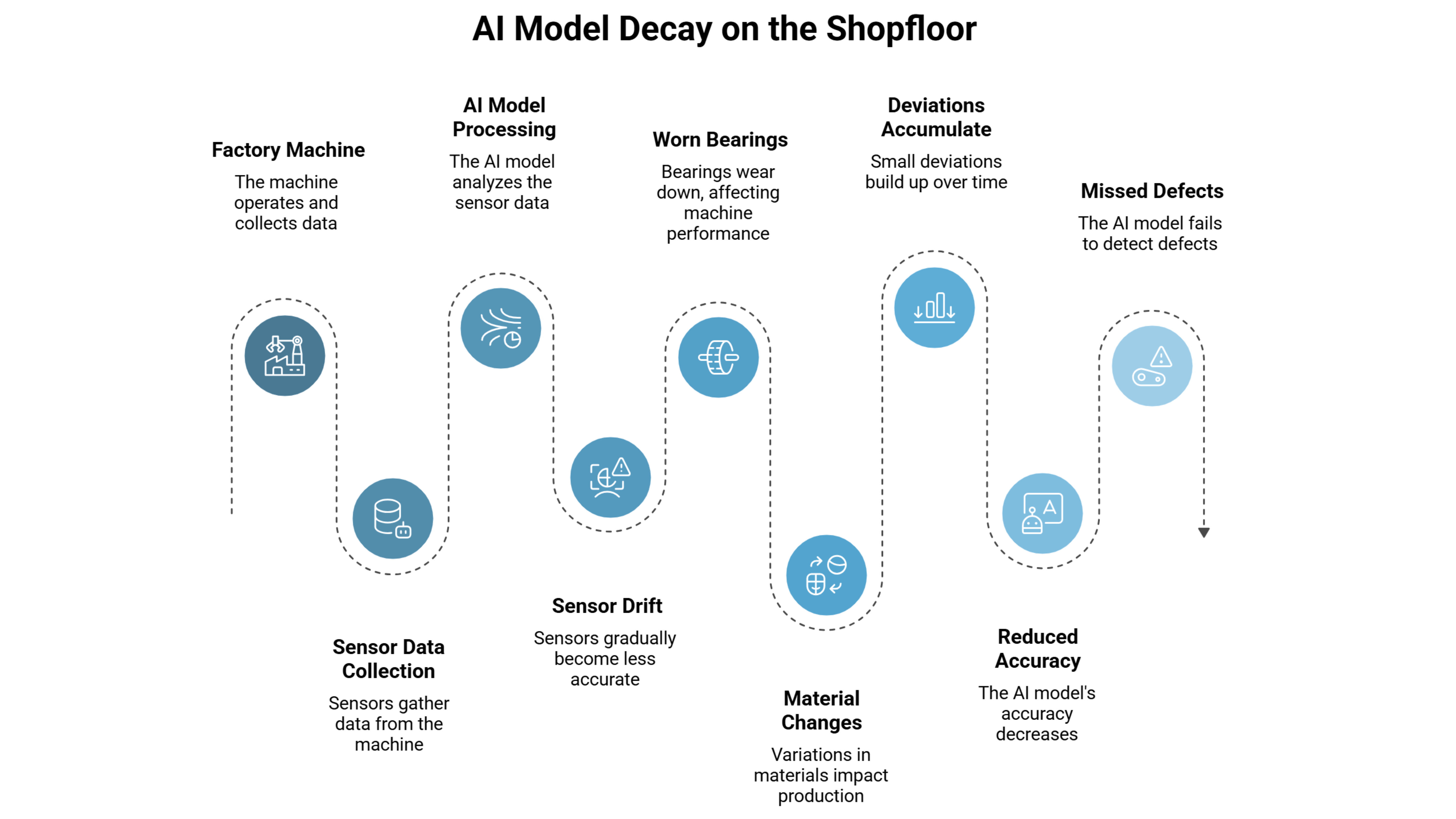

The Hidden Problem: Slow Decay on the Shopfloor

Imagine a model deployed to detect defects in metal castings. On day one, the model achieves 95% accuracy, flagging almost every flaw. The production team celebrates - the AI seems flawless. A few months later, engineers notice a gradual increase in defective outputs slipping past the system. The AI isn’t broken. The software hasn’t crashed. But its performance has quietly eroded.

This isn’t a rare occurrence. In real-world manufacturing, models can lose 5-20% of their predictive accuracy within the first six months. In highly regulated environments, like automotive or electronics assembly, even a 5% slip can mean more rework, increased scrap, or safety risks.

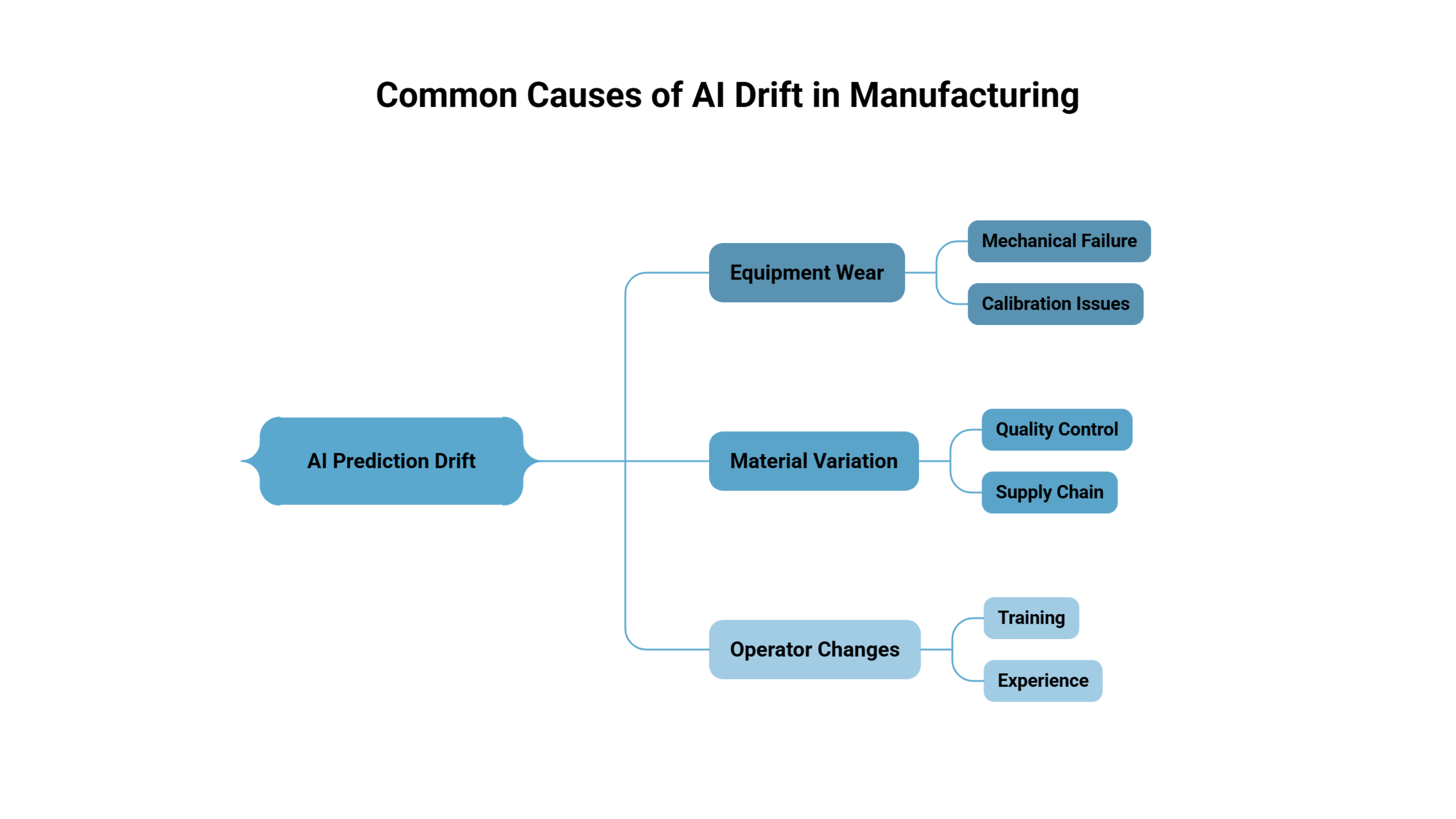

Why It Happens: Shifting Realities on the Shopfloor

The core reason for slow AI decay is simple: the world the AI learned from changes over time. Models are trained on historical data - machine readings, defect logs, environmental conditions - but factories are dynamic systems. Several factors contribute to this drift:

1. Equipment Wear and Process Drift

Machines age. Bearings wear, temperature sensors drift, and conveyor belts stretch. A model trained on ideal machine performance will gradually see input data it wasn’t trained for. The chain from cause to effect to consequence is easy to trace:

- Cause: A temperature sensor begins to read 2-3°C higher than actual.

- Effect: The AI misinterprets this as normal process variation.

- Consequence: Defects that would have triggered a warning are now missed.

Over months, minor wear accumulates into a measurable drop in predictive accuracy.
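One way to catch the sensor example above is to compare recent readings with a baseline recorded when the sensor was last calibrated. The sketch below is a minimal illustration, not a prescribed method: the readings, the function names, and the 1.5 °C tolerance are all hypothetical, and it assumes the true process temperature has not changed between the two windows.

```python
from statistics import mean

def mean_offset(baseline, recent):
    """Difference between the recent mean reading and the baseline mean."""
    return mean(recent) - mean(baseline)

def sensor_drifted(baseline, recent, tolerance_c=1.5):
    """True when the recent mean has moved more than tolerance_c from baseline."""
    return abs(mean_offset(baseline, recent)) > tolerance_c

# Hypothetical temperature readings in °C (illustrative values only).
baseline = [650.2, 649.8, 650.1, 650.0, 649.9]  # recorded at calibration
recent = [652.4, 652.9, 652.6, 653.1, 652.5]    # same process, months later

offset = mean_offset(baseline, recent)
print(f"offset: {offset:+.2f} °C, drifted: {sensor_drifted(baseline, recent)}")
```

A shift in the mean cannot by itself distinguish sensor drift from a real process change, so a flag like this is best treated as a prompt to check the sensor against a calibrated reference.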

2. Raw Material Variation

Even with tight supply chains, raw materials fluctuate. Steel composition, plastic polymer blends, or chemical concentrations vary slightly between batches. A model trained on one set of material characteristics assumes consistent input. When these inputs drift:

- Cause: A supplier delivers metal with slightly higher carbon content.

- Effect: Casting defects appear differently than in the training data.

- Consequence: The AI misses subtle flaws, leading to quality issues downstream.
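A shift like the carbon-content example can be quantified by comparing the distribution of a material property in the training data with recent batches. The sketch below uses the Population Stability Index (PSI), which is one common choice among several and is not taken from the article. The carbon values, bin count, and function name are hypothetical, and the equal-width binning over the training range is a simplification.

```python
import math

def psi(expected, actual, bins=5, eps=1e-6):
    """Population Stability Index between a training sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width) if width > 0 else 0
            # Values outside the training range are clamped into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical carbon content (% by weight) per batch.
training_batches = [0.30, 0.31, 0.32, 0.33, 0.34, 0.30, 0.31, 0.32, 0.33, 0.34]
recent_batches = [0.33, 0.34, 0.34, 0.35, 0.35, 0.36, 0.34, 0.35, 0.33, 0.36]

score = psi(training_batches, recent_batches)
print(f"PSI: {score:.2f}")
```

A commonly quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs are conventions rather than guarantees and should be tuned per process.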

3. Subtle Operator Changes

Human behavior on the shopfloor isn’t static. Operators may adjust machine speeds, introduce small manual tweaks, or skip minor calibration steps. AI systems rarely account for these micro-behaviors.

- Cause: Experienced operators adjust machine timing to reduce vibrations.

- Effect: Sensor readings shift outside the model’s expected range.

- Consequence: The AI’s signals become less accurate, producing more false positives and false negatives and eroding staff trust in the system.
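A simple way to see readings leaving the model’s expected range is to measure what fraction of recent values fall outside the minimum and maximum seen during training. This is a rough sketch under stated assumptions: the vibration values and the function name are hypothetical, and a raw min/max range is sensitive to outliers in the training data.

```python
def out_of_range_fraction(training, recent):
    """Fraction of recent readings outside the [min, max] range seen in training."""
    lo, hi = min(training), max(training)
    outside = sum(1 for v in recent if v < lo or v > hi)
    return outside / len(recent)

# Hypothetical vibration amplitude readings in mm/s.
training_vibration = [2.1, 2.4, 2.2, 2.6, 2.3, 2.5]
# After operators retime the machine to reduce vibration.
recent_vibration = [1.8, 1.9, 2.2, 1.7, 2.0, 1.9, 2.1, 1.6]

fraction = out_of_range_fraction(training_vibration, recent_vibration)
print(f"{fraction:.0%} of recent readings are outside the training range")
```

A high fraction does not mean the process is worse (here the vibration is lower), only that the model is being asked to judge conditions it never saw.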

Why This Problem Often Goes Unnoticed

A big reason AI decay is overlooked is how success is measured. Factories monitor obvious failures: crashes, errors, or complete misclassification. Slow degradation is silent:

- No red flags appear in dashboards.

- Outputs stay “good enough” that operators don’t question them.

- Management assumes the model is still performing at day-one accuracy.
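Making slow decay visible usually means comparing predictions against ground truth on an ongoing basis, for example on parts that are also inspected manually. The sketch below tracks accuracy over a rolling window and flags a drop from the day-one figure. The class name, window size, and allowed drop are hypothetical, and it assumes audited labels are available for at least a sample of parts.

```python
from collections import deque

class RollingAccuracy:
    """Tracks accuracy over the most recent audited predictions."""

    def __init__(self, window=200, baseline=0.95, max_drop=0.03):
        self.results = deque(maxlen=window)  # oldest results fall off automatically
        self.baseline = baseline             # accuracy measured at deployment
        self.max_drop = max_drop             # tolerated drop before flagging

    def record(self, predicted_defect, actual_defect):
        self.results.append(predicted_defect == actual_defect)

    def accuracy(self):
        return sum(self.results) / len(self.results) if self.results else None

    def degraded(self):
        # Only judge once the window is full, to avoid noisy early alarms.
        full = len(self.results) == self.results.maxlen
        return full and self.accuracy() < self.baseline - self.max_drop

# Hypothetical audit: 10 parts, the model gets 9 right.
monitor = RollingAccuracy(window=10, baseline=0.95, max_drop=0.03)
audit = [(True, True)] * 4 + [(False, False)] * 5 + [(False, True)]
for predicted, actual in audit:
    monitor.record(predicted, actual)

print(monitor.accuracy(), monitor.degraded())
```

In practice the window would be far larger than ten parts; a small window is used here only to keep the example readable.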

Real Consequences in Manufacturing

The downstream effects of slow AI decay are tangible:

- Increased Waste: Defects slip through, causing rework or scrapped parts.

- Reduced Efficiency: Production slows because operators spend more time double-checking AI predictions.

- Trust Erosion: Workers lose confidence in the AI system, undermining adoption.

- Hidden Costs: Maintenance and troubleshooting spike, often without anyone realizing that the root cause is model drift.

In one automotive plant, a defect-detection model dropped from 96% to 88% accuracy over four months. While no system alarms were triggered, this small decay caused a measurable rise in rework costs, which management initially attributed to raw materials rather than the AI.
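The drop in that example looks small as an accuracy figure but large as an error rate. The short calculation below restates the 96% and 88% figures from the example above; the volume of 10,000 parts is a hypothetical number chosen only for scale, and "wrong calls" covers both missed defects and false alarms, since accuracy alone does not separate them.

```python
def error_rate_pct(accuracy_pct):
    """Share of parts the model judges incorrectly, in percent."""
    return 100 - accuracy_pct

before = error_rate_pct(96)  # 4
after = error_rate_pct(88)   # 12

parts_inspected = 10_000     # hypothetical volume, for scale only
wrong_before = parts_inspected * before // 100
wrong_after = parts_inspected * after // 100

print(f"wrong calls before: {wrong_before}")
print(f"wrong calls after:  {wrong_after}")
print(f"error rate multiplied by {after / before:.1f}")
```

An eight-point fall in accuracy therefore triples the number of parts the model gets wrong, which is consistent with a noticeable rise in rework.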

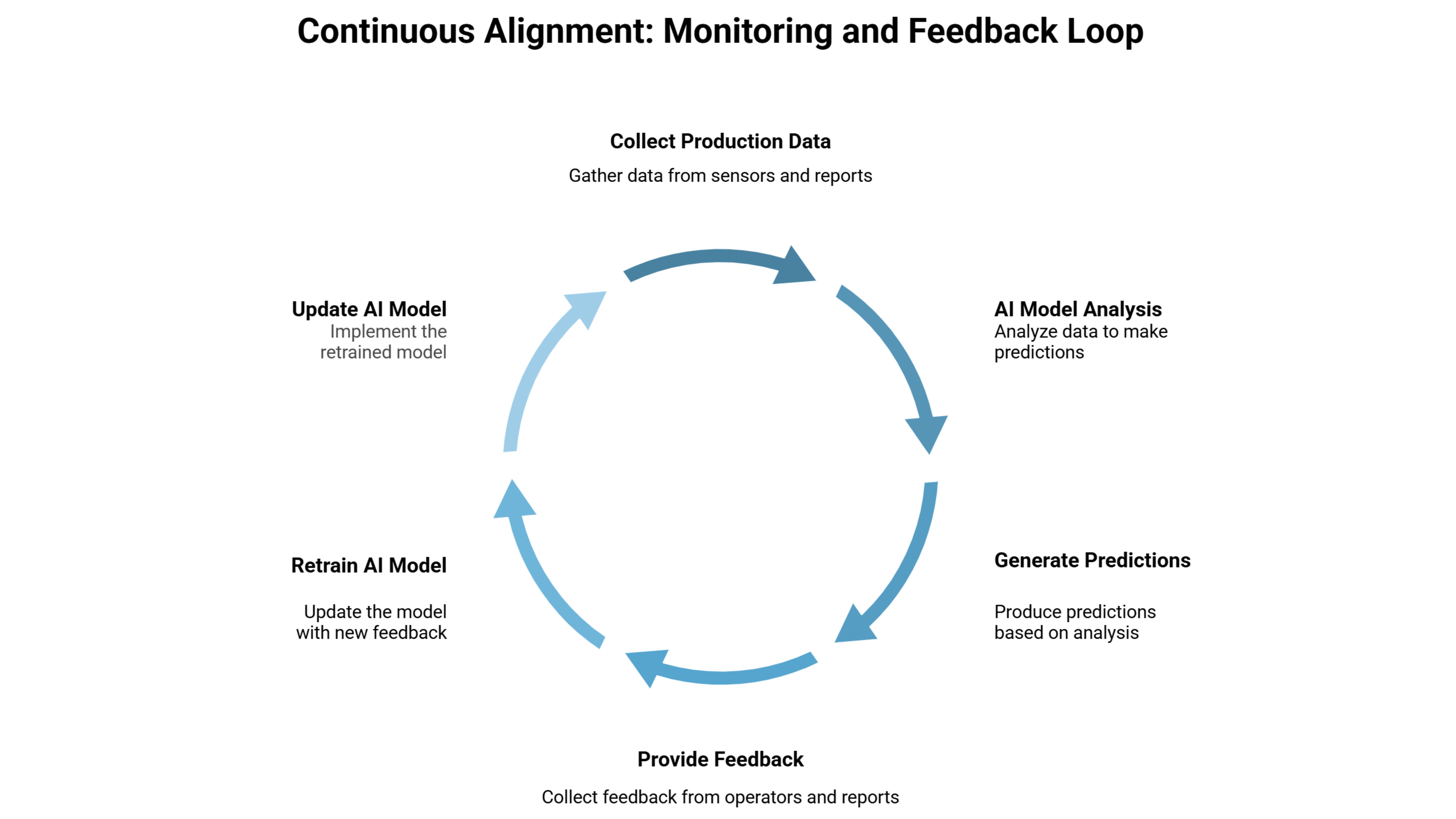

Tackling the Problem: Continuous Alignment

AI models don’t stay reliable on their own. Once deployed, they need to be checked continuously against production conditions that keep shifting. Teams that consistently monitor model performance on the shopfloor are far more likely to catch early signs of drift before quality or output is affected.

- Regular Data Monitoring: Track inputs for shifts in sensor readings, material properties, or operator behavior.

- Scheduled Model Retraining: Update models periodically with fresh data reflecting current conditions.

- Integrated Feedback Loops: Feed defect reports or operator insights back into model refinement.
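The three practices above can be combined into a single periodic check that decides whether a model is due for retraining. The sketch below is one possible policy, not a standard: the `ModelHealth` fields, the thresholds, and the example values are all hypothetical and would need tuning for each line and model.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class ModelHealth:
    last_trained: date
    input_drift_score: float  # e.g. a drift statistic computed on key inputs
    audited_accuracy: float   # accuracy on recently audited parts

def retraining_reasons(health, today, max_age_days=90,
                       drift_limit=0.25, min_accuracy=0.92):
    """Return the reasons (if any) why the model should be retrained."""
    reasons = []
    if (today - health.last_trained).days > max_age_days:
        reasons.append("scheduled retraining overdue")
    if health.input_drift_score > drift_limit:
        reasons.append("input data has drifted")
    if health.audited_accuracy < min_accuracy:
        reasons.append("audited accuracy below target")
    return reasons

# Hypothetical snapshot of a defect-detection model.
health = ModelHealth(last_trained=date(2024, 1, 10),
                     input_drift_score=0.31,
                     audited_accuracy=0.90)

for reason in retraining_reasons(health, today=date(2024, 5, 10)):
    print("retrain:", reason)
```

Returning the reasons rather than a bare yes/no keeps the decision explainable to the engineers and operators who have to act on it.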

Systems like Seewise.AI can support this by capturing and analyzing production data over time, but the core requirement is simple: continuous attention to how real conditions drift from the data the model was originally trained on.

Why Deployment Isn’t the Finish Line

AI in manufacturing doesn’t fail spectacularly; it fades quietly. Without attention, a model that once delivered near-perfect predictions slowly becomes less reliable, even when “nothing breaks.” Recognizing the subtle drivers of performance decay - sensor drift, material variation, and operator behavior changes - is essential.

The takeaway for manufacturers is clear: deploying an AI model is not the finish line. It’s the start of an ongoing process to ensure the AI keeps pace with a shopfloor that never stops changing. Ignoring this can turn a trusted tool into a hidden liability - quietly, month by month.