When Manufacturing AI Fails, It’s Rarely a Model Problem

When AI deployments in manufacturing fail, the first assumption is often that the technology is at fault: the model isn’t trained properly, the dataset is too small, or accuracy is low. But real-world failures are rarely about the model itself.

On the shopfloor, AI interacts with humans, processes, machinery, and production pressure. It operates inside an ecosystem shaped by deadlines, shift schedules, and operational trade-offs. Failures often emerge from system design, operational constraints, feedback loops, and human behavior rather than technical flaws. Understanding these dimensions is essential for long-term adoption.

AI does not operate in isolation. It becomes part of a workflow. And workflows are messy, adaptive, and constantly changing.

Let’s explore five common areas where manufacturing AI struggles and why.

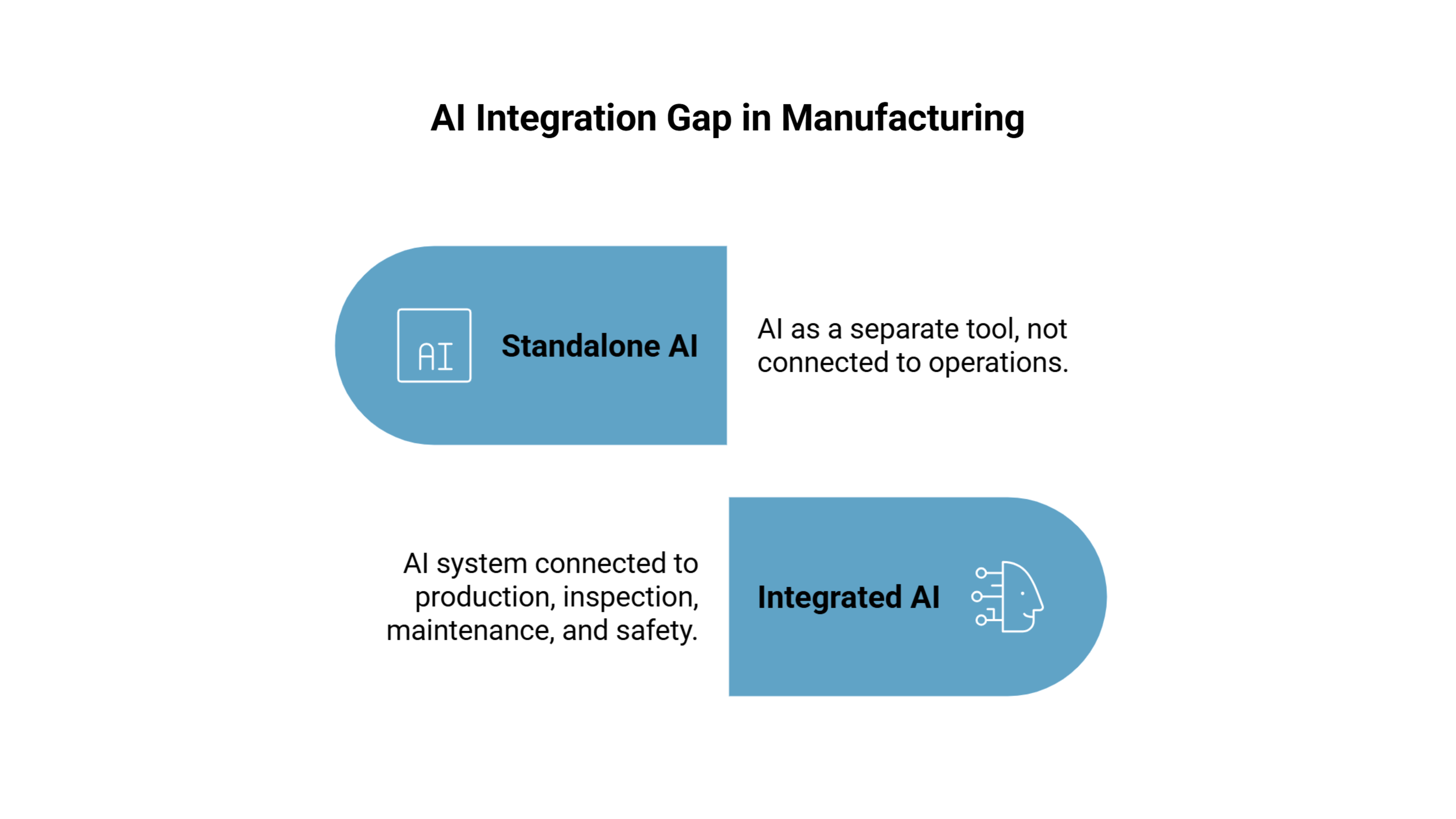

1. Integration Gaps: AI Should Fit Into Existing Workflows

Even a technically strong AI system can fail if it doesn’t integrate with factory operations. Alerts delivered to a standalone dashboard may never be noticed. Data may not sync with shift reports, incident logs, maintenance schedules, or ERP systems.

For instance, a quality inspection AI that detects assembly defects might generate real-time alerts. But if those alerts don’t automatically create tickets or appear in the production management system, supervisors treat them as “another dashboard” rather than actionable signals.
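As a rough illustration of that hand-off, the sketch below turns a model alert into a ticket with an owner and a priority. Everything here is hypothetical: the `DefectAlert` and `Ticket` shapes, the `LINE_OWNERS` mapping, and the 0.9 priority cutoff are assumptions for illustration, and a real plant would map alerts onto whatever its production management system expects.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DefectAlert:
    """Hypothetical alert emitted by a quality inspection model."""
    line_id: str
    defect_type: str
    confidence: float
    detected_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


@dataclass
class Ticket:
    """Hypothetical ticket shape; a real production system defines its own."""
    title: str
    assignee: str
    priority: str
    source: str = "quality-inspection-ai"


# Assumed mapping from production line to the supervisor who owns it.
LINE_OWNERS = {"line-3": "supervisor.shift-a"}


def alert_to_ticket(alert: DefectAlert) -> Ticket:
    """Convert an alert into a ticket so it lands in an existing work queue."""
    # Assumed cutoff: very confident detections are escalated.
    priority = "high" if alert.confidence >= 0.9 else "normal"
    return Ticket(
        title=f"{alert.defect_type} on {alert.line_id}",
        assignee=LINE_OWNERS.get(alert.line_id, "unassigned"),
        priority=priority,
    )


ticket = alert_to_ticket(DefectAlert("line-3", "misaligned bracket", 0.94))
print(ticket)
```

The point of the sketch is the named assignee: an alert that arrives in someone's existing queue is harder to dismiss as "another dashboard".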

In high-volume environments, small inefficiencies compound quickly, and maintaining consistency at scale becomes just as critical as accuracy. AI must reduce friction, not introduce it.

Integration determines whether AI becomes infrastructure or just an experiment that fades over time.

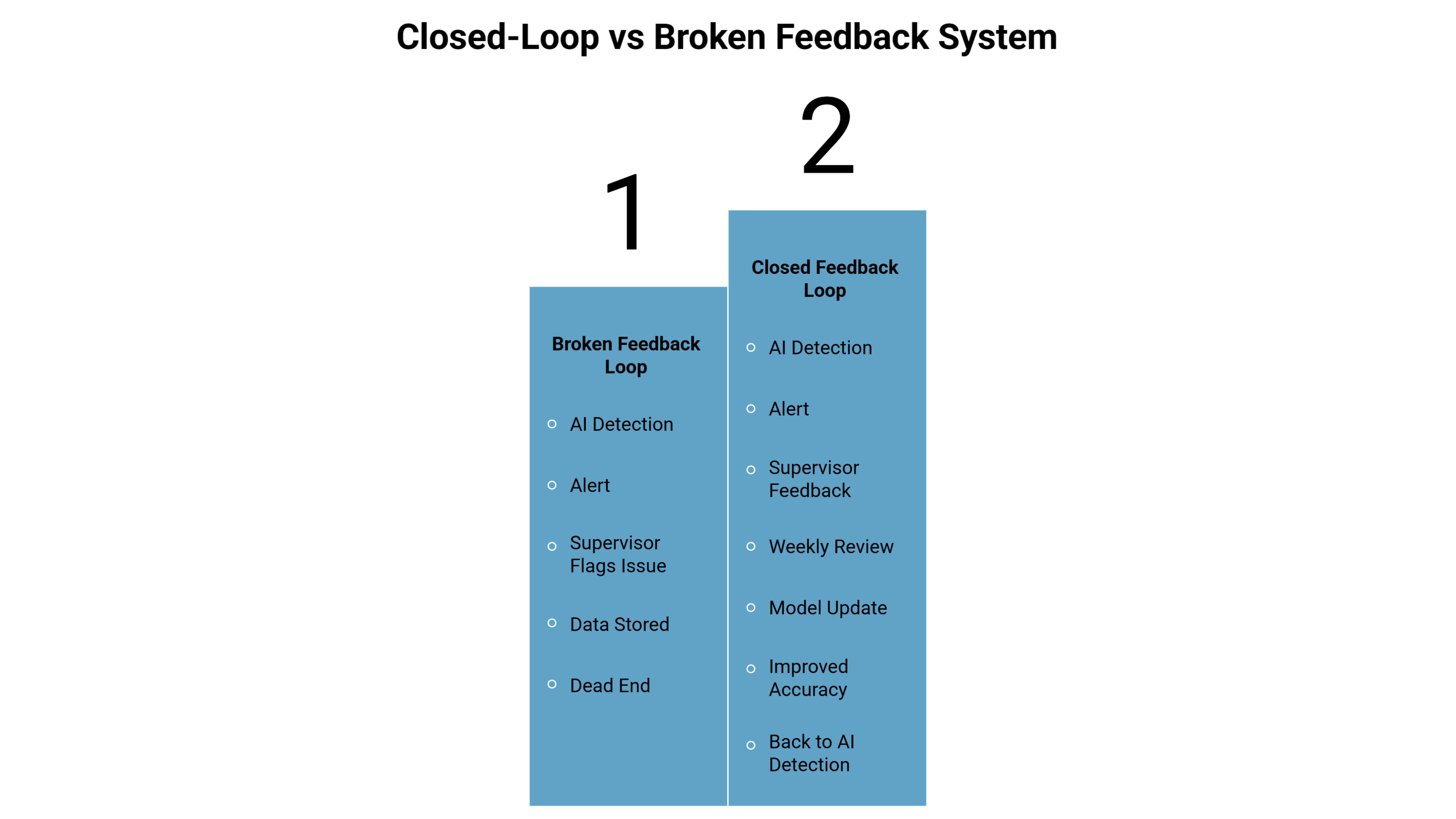

2. Feedback Loops That Don’t Close

AI cannot succeed on a “set it and forget it” basis. Manufacturing environments evolve. Materials change. Processes are adjusted. Equipment ages.

If alerts are flagged as incorrect but the data isn’t reviewed or used for retraining, the system stagnates. Supervisors see repeated mistakes. Trust erodes gradually rather than dramatically, but enough to reduce engagement.

Take predictive maintenance as an example. A system may flag equipment at risk based on vibration patterns. If technicians dismiss certain alerts as irrelevant but that information is never fed back into the system, improvement stalls. Eventually, users stop relying on recommendations altogether.

A structured feedback cadence - weekly reviews, edge case discussions, incremental retraining - transforms AI from static automation into a learning system. Without it, performance drifts silently.
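One way to make a weekly review concrete is to log each technician verdict and look for assets whose alerts are mostly dismissed. The sketch below is a minimal version of that idea. The `AlertFeedback` record, the verdict labels, and the 50% dismissal limit are all assumptions for illustration, not a prescribed process.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertFeedback:
    """Hypothetical record of a technician's verdict on one alert."""
    alert_id: str
    asset_id: str
    verdict: str  # "confirmed" or "dismissed"


def dismissal_rates(feedback: list[AlertFeedback]) -> dict[str, float]:
    """Share of alerts dismissed per asset over the review window."""
    total = Counter(f.asset_id for f in feedback)
    dismissed = Counter(
        f.asset_id for f in feedback if f.verdict == "dismissed"
    )
    return {asset: dismissed[asset] / total[asset] for asset in total}


def retraining_candidates(
    feedback: list[AlertFeedback], max_dismissal_rate: float = 0.5
) -> list[str]:
    """Assets whose alerts are dismissed more often than the assumed limit."""
    rates = dismissal_rates(feedback)
    return sorted(a for a, r in rates.items() if r > max_dismissal_rate)


week = [
    AlertFeedback("a1", "press-07", "dismissed"),
    AlertFeedback("a2", "press-07", "dismissed"),
    AlertFeedback("a3", "press-07", "confirmed"),
    AlertFeedback("a4", "pump-02", "confirmed"),
]
print(retraining_candidates(week))  # ['press-07']
```

A list like this gives the weekly review an agenda: the flagged assets are where dismissed alerts should be inspected and, if warranted, fed into the next incremental retraining.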

3. Operational Constraints: The Reality of Production Pressure

Factories operate under constraints that AI teams sometimes underestimate. Production targets don’t pause for model retraining. Supervisors don’t gain extra hours for labeling data. Maintenance teams already operate at capacity.

Consider a computer vision system for safety compliance. Weekly retraining sounds manageable in theory. In practice:

- Who collects edge cases?

- Who labels them accurately?

- Who validates deployment?

- When does this happen without disrupting shifts?

If these questions are not answered upfront, AI slowly degrades. Not because it is weak - but because it is unsupported.

Operational alignment is not a technical detail; it is a survival requirement. AI systems must be designed around the cadence and constraints of production, not idealized workflows.

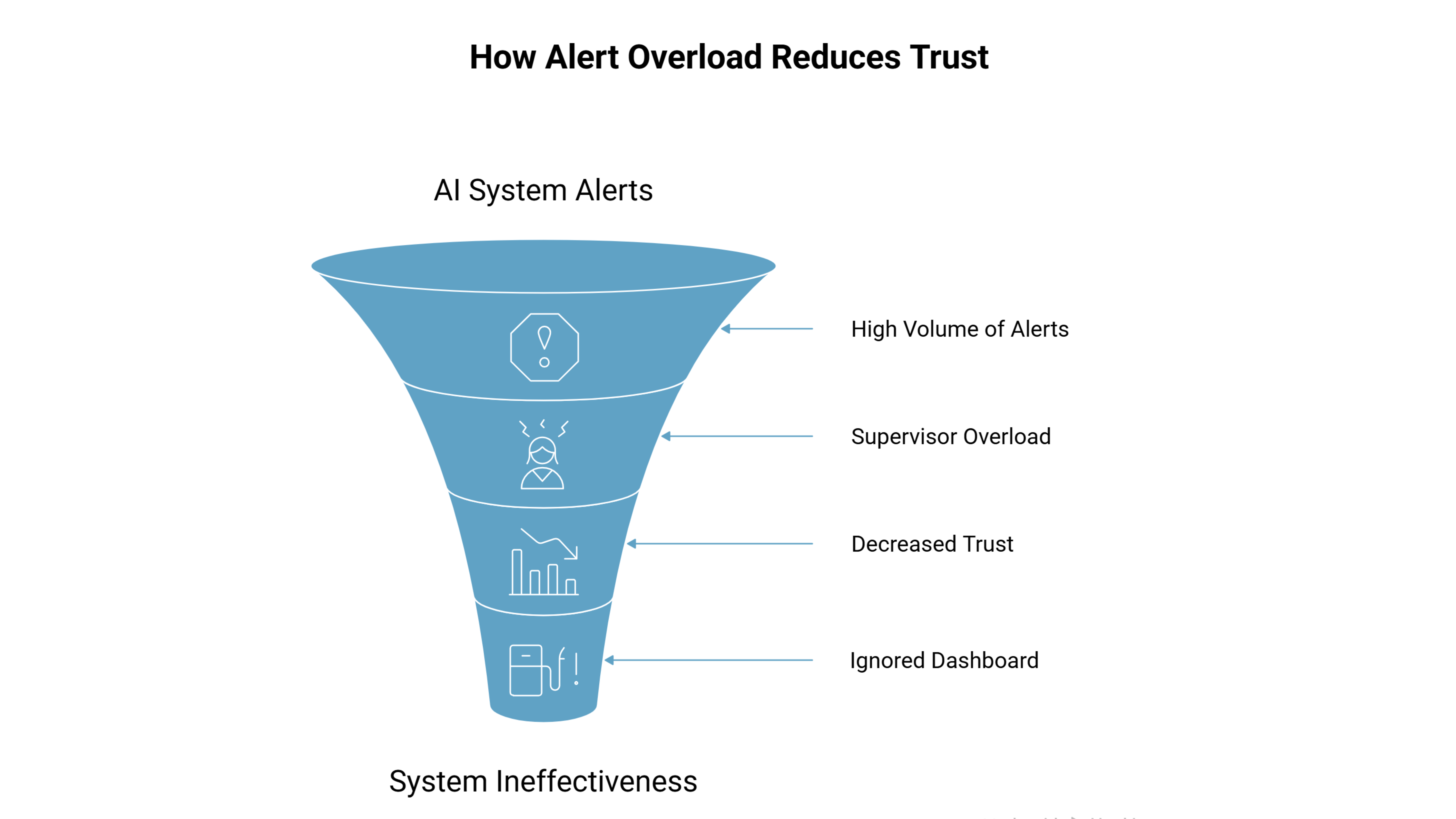

4. Human Behavior & Workflow Adaptation

AI systems influence behavior. Workers adjust. Supervisors adapt. Some changes are positive; others are unintended.

Workers may comply with safety requirements only in camera-monitored areas. Operators may find ways to work around systems they perceive as slowing output. Supervisors may ignore dashboards if alerts feel repetitive or unclear.

A quality monitoring AI might correctly flag defects, but if it increases review time without reducing rework downstream, operators may see it as friction rather than support. Adoption is not automatic - it is earned through usability and trust.

This is where alert design matters. Excessive notifications create fatigue. Ambiguous alerts create confusion. Lack of ownership creates inaction.

AI must support human decision-making, not overwhelm it.
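To illustrate one way of limiting repetitive notifications, the sketch below suppresses repeats of the same alert in the same zone during a cooldown window. The `AlertThrottle` class, the zone and alert names, and the ten-minute window are hypothetical choices; the right window depends on the process and the risk involved.

```python
from datetime import datetime, timedelta


class AlertThrottle:
    """Suppress repeats of the same alert within a cooldown window."""

    def __init__(self, cooldown: timedelta):
        self.cooldown = cooldown
        # Last time a notification was actually sent, per (zone, alert type).
        self._last_sent: dict[tuple[str, str], datetime] = {}

    def should_notify(self, zone: str, alert_type: str, now: datetime) -> bool:
        key = (zone, alert_type)
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return False  # same issue was raised recently; skip the repeat
        self._last_sent[key] = now
        return True


throttle = AlertThrottle(cooldown=timedelta(minutes=10))
t0 = datetime(2024, 1, 1, 8, 0)
print(throttle.should_notify("zone-b", "no-helmet", t0))                          # True
print(throttle.should_notify("zone-b", "no-helmet", t0 + timedelta(minutes=3)))   # False
print(throttle.should_notify("zone-b", "no-helmet", t0 + timedelta(minutes=12)))  # True
```

Suppressed alerts can still be logged for later review; the throttle only decides what interrupts a supervisor.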

5. Environmental Variability: The Physical Layer

Factories are dynamic environments. Lighting changes between shifts. Dust accumulates on sensors. Machinery vibration shifts camera alignment. Raw materials vary in texture and color.

Even if the model itself is technically sound, environmental changes affect inputs. A helmet detection system may perform reliably in controlled conditions but struggle during night shifts or in high-dust zones. A maintenance model trained during one operating season may behave differently during temperature fluctuations.

Environmental variability is not an edge case; it is the norm. Systems must account for calibration, monitoring, and adjustment over time. Ignoring this layer guarantees drift.
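Monitoring inputs can start very simply. The sketch below compares a summary statistic of recent inputs (here, mean frame brightness) against a baseline and reports how far it has moved in units of the baseline's standard deviation. The sample values, the choice of brightness as the statistic, and the `DRIFT_LIMIT` threshold are illustrative assumptions; a production system would likely track several statistics per camera or sensor.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], recent: list[float]) -> float:
    """How many baseline standard deviations the recent mean has moved."""
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation; cannot scale the shift")
    return abs(mean(recent) - mean(baseline)) / spread


# Hypothetical mean frame brightness (0-255) for sampled frames.
day_shift = [128.0, 131.0, 126.0, 130.0, 129.0, 127.0]
night_shift = [96.0, 99.0, 94.0, 98.0, 97.0, 95.0]

score = drift_score(day_shift, night_shift)
DRIFT_LIMIT = 3.0  # assumed threshold; tune per site
if score > DRIFT_LIMIT:
    print(f"input drift detected (score={score:.1f}); schedule recalibration")
```

A check like this says nothing about model accuracy directly. It only signals that the inputs no longer resemble the conditions the model was validated under, which is the cue to recalibrate or review.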

Strategies That Make Manufacturing AI Work

The difference between adoption and abandonment is system thinking.

Controlled Alerts - Prioritize high-confidence detections and high-risk scenarios. Reduce noise before it reaches supervisors.

Tight Feedback Loops - Make it simple to flag incorrect alerts, review patterns, and retrain incrementally.

Clear Ownership - Assign accountability for monitoring performance, reviewing drift, and coordinating updates. Without ownership, AI becomes everyone’s tool and no one’s responsibility.

Alignment with Constraints - Allocate time for labeling, ensure infrastructure supports retraining, and embed AI outputs into existing reporting systems.
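The "Controlled Alerts" strategy above could be sketched as a filter that applies a confidence threshold per risk tier and orders what remains by risk. The tiers, thresholds, and `Detection` fields below are assumptions for illustration. In particular, using a lower threshold for high-risk scenarios is one possible design choice, not a rule from this article.

```python
from dataclasses import dataclass

# Assumed risk tiers and per-tier confidence thresholds; tune per site.
MIN_CONFIDENCE = {"high": 0.6, "medium": 0.8, "low": 0.95}
RISK_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Detection:
    """Hypothetical model output with an assigned risk tier."""
    label: str
    risk: str
    confidence: float


def select_alerts(detections: list[Detection]) -> list[Detection]:
    """Keep detections that clear their tier's threshold, highest risk first."""
    kept = [d for d in detections if d.confidence >= MIN_CONFIDENCE[d.risk]]
    return sorted(kept, key=lambda d: (RISK_ORDER[d.risk], -d.confidence))


detections = [
    Detection("missing gloves", "low", 0.90),
    Detection("person in robot cell", "high", 0.70),
    Detection("no helmet", "medium", 0.85),
]
print([d.label for d in select_alerts(detections)])
# ['person in robot cell', 'no helmet']
```

Detections that are filtered out do not have to be discarded; they can be stored for the periodic review so the thresholds themselves can be revisited.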

Many improvements come not from changing algorithms but from refining workflows, clarifying roles, and improving integration.

The Shift from Model Thinking to System Thinking

Manufacturing AI rarely fails because the technology is weak. Failures arise from:

- Integration gaps

- Ineffective or missing feedback loops

- Operational constraints

- Human behavior and workflow adaptations

- Environmental variability

AI in manufacturing is a socio-technical system embedded in a complex, high-pressure environment.

Treat AI like a model problem, and it will fail like a system problem. Treat it as part of the system, and it stands a real chance of long-term adoption.